Most brands A/B test the same way.

They split a subject line, check which one got more opens, declare a winner, and move on.

Maybe they do it once a month. Maybe less. And they wonder why nothing ever meaningfully changes in their results.

The problem isn't that they're testing. It's that they're testing without a system, which means the data they're collecting rarely connects to anything that actually moves the needle.

We've seen this across enough accounts to say with confidence: unstructured testing is costing most brands more than they realize.

The decisions being made off bad data compound quietly over months, and the gap between what your email program could be doing and what it's actually doing gets wider without anyone noticing.

What To Do Instead

Once or twice a month, we run what we call a “HIT”.

High Impact Test.

The name matters because the filter matters.

Before we test anything, we ask one question: does this connect to our quarterly and monthly objectives for this account?

If the answer is no, we don't test it.

There are infinite things you could test inside Klaviyo.

The ones worth running are the ones tied to something you're actively trying to move.

Once we've identified the right thing to test, we build it out across multiple sends over a longer duration.

Not one campaign, one split, one result. Multiple sends, accumulated data, actual statistical significance.

That's the part most brands skip. They run a test on a single email to a small segment and make decisions off a sample size that would make a statistician wince.
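To make the sample-size point concrete, here is a minimal sketch of one common way to check significance: a two-proportion z-test on order rate. This is an illustration, not necessarily the check our sheet performs. The function name and all the numbers are hypothetical, and it assumes each recipient places at most one order.

```python
import math

def two_proportion_z_test(orders_a, recipients_a, orders_b, recipients_b):
    """Two-sided z-test for a difference between two order rates.

    Assumes at most one order per recipient (hypothetical simplification).
    Returns (z, p_value).
    """
    rate_a = orders_a / recipients_a
    rate_b = orders_b / recipients_b
    # Pooled rate under the assumption that both versions perform the same
    pooled = (orders_a + orders_b) / (recipients_a + recipients_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / recipients_a + 1 / recipients_b))
    if se == 0:
        return 0.0, 1.0  # no variation at all, nothing to distinguish
    z = (rate_b - rate_a) / se
    # Two-sided p-value from the standard normal CDF
    p_value = 2 * (1 - 0.5 * (1 + math.erf(abs(z) / math.sqrt(2))))
    return z, p_value

# Made-up numbers: identical order rates (4.0% vs 5.6%), different audience sizes
small = two_proportion_z_test(20, 500, 28, 500)        # one send to a small segment
large = two_proportion_z_test(200, 5000, 280, 5000)    # accumulated across many sends

print("small sample p-value:", round(small[1], 4))
print("large sample p-value:", round(large[1], 4))
```

With these made-up inputs, the same gap in order rate is not significant at the usual 0.05 threshold on the small segment, but it is once the data is accumulated across more recipients. That is the reason for running a test over multiple sends.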

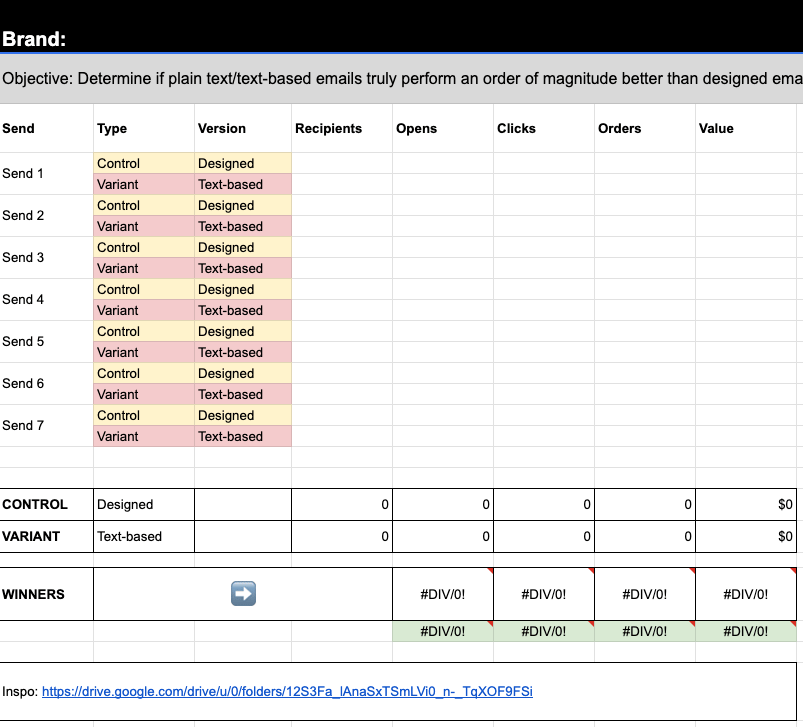

The Sheet

Every test we run lives in a single Google Sheet. One sheet per client, all tests stacked inside it.

Control versus variant. What we normally do versus what we're testing against. Every campaign that's part of the test gets linked directly inside the sheet so anyone on the team can pull the source data without hunting through Klaviyo.

Then we plug in the raw numbers. Recipients, opens, clicks, orders, revenue. The sheet does the rest.

What it spits out is a value for each version across every metric. Not just which one got more opens. Which one actually made more money across everyone who received it.

That distinction matters more than most people realize. An email can win on opens and lose on revenue.

An email can have average click rates and still generate significantly more orders. Looking at any single metric in isolation can easily point you in the wrong direction.
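The sheet's formulas aren't shown here, so treat the following as a rough sketch of the kind of calculation involved, assuming every metric is divided by recipients. The class name, function names, and all figures are hypothetical.

```python
from dataclasses import dataclass

@dataclass
class Totals:
    """Raw numbers for one version, summed across every send in the test."""
    recipients: int
    opens: int
    clicks: int
    orders: int
    revenue: float

def per_recipient_metrics(t):
    # Normalize everything by recipients so the two versions are comparable
    return {
        "open_rate": t.opens / t.recipients,
        "click_rate": t.clicks / t.recipients,
        "order_rate": t.orders / t.recipients,
        "revenue_per_recipient": t.revenue / t.recipients,
    }

def winners(control, variant):
    """Return which version leads on each metric."""
    c = per_recipient_metrics(control)
    v = per_recipient_metrics(variant)
    result = {}
    for metric in c:
        if v[metric] > c[metric]:
            result[metric] = "variant"
        elif v[metric] < c[metric]:
            result[metric] = "control"
        else:
            result[metric] = "tie"
    return result

# Made-up totals: the variant wins on opens but loses on money
control = Totals(recipients=10000, opens=4200, clicks=310, orders=95, revenue=6650.0)
variant = Totals(recipients=10000, opens=4600, clicks=300, orders=80, revenue=5200.0)

result = winners(control, variant)
for metric, winner in result.items():
    print(f"{metric}: {winner}")
```

In this invented example the variant leads on open rate while the control leads on clicks, orders, and revenue per recipient. That is the situation a subject-line-only readout would get wrong.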

Here’s what it looks like:

How This Works In Practice

We ran a timing test recently for one of our clients, Small Pet Select.

Control was their standard send time.

Variant was a different window we wanted to test against it.

We ran it across several sends, linked each campaign in the sheet, and started plugging in the data.

The results were not close.

Our existing send time was winning across every single metric. Opens, clicks, orders, and total revenue. The variant was down double digits in almost every KPI.

Sometimes tests come back murky.

Close results, wins on some metrics and losses on others, no clear signal either way.

This was not that. This was a clean, decisive result that we could take into our Monday call with the client and say with confidence: we tested this, here's the data, here's what it means, here's what we're doing next.

That's what a real A/B test looks like.

Not a vibe. Not a gut feeling.

A structured process that produces a number you can actually make a decision from.

The Three Things That Make This Work

1️⃣ The filter. Only test things connected to a real objective. Random testing produces random insight.

2️⃣ The duration. Run tests across multiple sends until the data is significant enough to trust. One campaign is rarely enough.

3️⃣ The full picture. Look at revenue per recipient, not just opens. The metric that matters is the one closest to money.

What You Can Do This Week

Pick one thing you've been assuming works without ever actually testing it. Send time, plain text versus designed, discount versus no discount, etc. One thing.

Build a simple tracking sheet. Control versus variant. Log recipients, opens, clicks, orders, and revenue for each send. Run it across at least three campaigns before you call a winner.
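If you'd rather prototype the tracking logic in code before building the sheet, here is a minimal sketch under a few assumptions: each logged send is one row, only recipients and revenue are shown for brevity, and no winner is called until both versions have at least three sends. The field names, the `summarize` helper, and the data are all hypothetical.

```python
from collections import defaultdict

MIN_SENDS = 3  # the "at least three campaigns" rule from the text

# Made-up log: one row per send (opens, clicks, and orders omitted for brevity)
sends = [
    {"arm": "control", "recipients": 5000, "revenue": 3000.0},
    {"arm": "variant", "recipients": 5000, "revenue": 2600.0},
    {"arm": "control", "recipients": 5000, "revenue": 3200.0},
    {"arm": "variant", "recipients": 5000, "revenue": 2700.0},
    {"arm": "control", "recipients": 5000, "revenue": 3100.0},
    {"arm": "variant", "recipients": 5000, "revenue": 2500.0},
]

def summarize(rows, min_sends=MIN_SENDS):
    """Accumulate sends per arm; return None until both arms have enough sends."""
    totals = defaultdict(lambda: {"sends": 0, "recipients": 0, "revenue": 0.0})
    for row in rows:
        t = totals[row["arm"]]
        t["sends"] += 1
        t["recipients"] += row["recipients"]
        t["revenue"] += row["revenue"]

    arms = ("control", "variant")
    if any(totals[arm]["sends"] < min_sends for arm in arms):
        return None  # too early to call a winner

    # Revenue per recipient, pooled across all sends in each arm
    rpr = {arm: totals[arm]["revenue"] / totals[arm]["recipients"] for arm in arms}
    relative_lift = (rpr["variant"] - rpr["control"]) / rpr["control"]
    return {"rpr": rpr, "relative_lift": relative_lift}

early = summarize(sends[:2])   # only one send per arm so far
final = summarize(sends)       # three sends per arm

print("after one send each:", early)
print("relative lift in revenue per recipient:", round(final["relative_lift"], 3))
```

Pooling recipients and revenue before dividing means larger sends carry more weight than smaller ones. An average of per-send rates would weight every send equally, whatever its size.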

Then make a decision based on the data, not the dashboard number that felt good in the moment.

That's the whole system. It's not complicated. It's just consistent.

If you want the actual template we use to track this across all our client accounts, click the link below and make a copy.

If you want a second pair of eyes on what's worth testing in your account right now, reply with the word TEST and I'll tell you exactly where I'd start.

— Anthony